US regulators are increasingly scrutinising AI companies over the potential negative impacts of chatbots

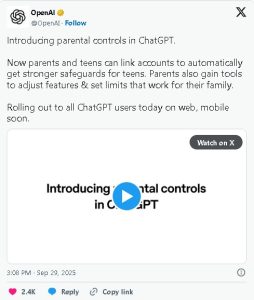

OpenAI is rolling out parental controls for ChatGPT on the web and mobile on Monday, following a lawsuit by the parents of a teen who died by suicide after the artificial intelligence startup’s chatbot allegedly coached him on methods of self-harm.

The controls let parents and teenagers opt in to stronger safeguards by linking their accounts: one party sends an invitation, and parental controls activate only if the other accepts, the company said.

US regulators are increasingly scrutinising AI companies over the potential negative impacts of chatbots. In August, Reuters reported that Meta’s AI rules allowed flirty conversations with kids.

Under the new measures, parents will be able to reduce exposure to sensitive content, control whether ChatGPT remembers past chats, and decide if conversations can be used to train OpenAI’s models, the Microsoft-backed company said on X.

OpenAI said parents can set quiet hours to block access at certain times and disable voice mode, along with image generation and editing. However, parents will not have access to a teen’s chat transcripts, the company added.

OpenAI said its systems and trained reviewers may notify parents in rare cases of serious safety risks, sharing only the information needed to protect the teen. OpenAI added that it will inform parents if a teen unlinks the accounts.

OpenAI, which has about 700 million weekly active users for its ChatGPT products, is building an age prediction system to estimate whether a user is under 18 so that the chatbot can automatically apply teen-appropriate settings.

Meta also announced new teen safeguards for its AI products last month. The company said it will train its systems to avoid flirty conversations and discussions of self-harm or suicide with minors, and will temporarily restrict access to certain AI characters.